[BONUS] LARGE LANGUAGE MODELS ARE HUMAN-LEVEL PROMPT ENGINEERS

Paper link: https://arxiv.org/pdf/2211.01910.pdf

Brief Summary

In the last few posts, we have seen how prompt engineering has become a thing and how the quality of a prompt largely determines the quality of the answer retrieved from a language model such as ChatGPT. A natural question follows: why not let the LLM find a good prompt itself? That is exactly what this paper does.

Task performance of LLMs depends significantly on the quality of the prompt used to steer the model, and most effective prompts have been handcrafted by humans. Inspired by classical program synthesis and the human approach to prompt engineering, the authors propose Automatic Prompt Engineer (APE) for automatic instruction generation and selection.

In-depth

Natural language prompt engineering is of particular interest for language models because it provides a general interface for human-machine communication. However, plain language prompts do not always yield the desired results, so finding a good one usually takes experimentation. To address this, the authors propose a novel algorithm, Automatic Prompt Engineer (APE), which treats instruction generation as a natural language program synthesis problem. APE uses LLMs to automatically generate and select instructions and refines them with an iterative Monte Carlo search. The contributions are: framing instruction generation as a black-box optimization problem, introducing APE and showing that it reaches human-level performance on a range of tasks, and demonstrating its applications in zero-shot learning and in steering LLMs toward desired behaviors.
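To make the "iterative Monte Carlo search" more concrete, here is a minimal Python sketch under stated assumptions: `sample` stands in for a hypothetical LLM call, `score` for any candidate-scoring function, and the resampling prompt paraphrases the idea described in the paper rather than quoting it. Each round keeps the best candidates found so far and asks the LLM for semantically similar variations of them, so the search explores locally around promising instructions.

```python
from typing import Callable, List

# Hypothetical LLM call: takes a prompt string, returns one sampled completion.
Sampler = Callable[[str], str]
# Hypothetical scoring function: higher means a better instruction.
Scorer = Callable[[str], float]

# Paraphrased resampling prompt (an assumption, not verbatim from the paper).
RESAMPLE_TEMPLATE = (
    "Generate a variation of the following instruction while keeping the semantic meaning.\n\n"
    "Input: {instruction}\n\nOutput:"
)


def iterative_search(
    seeds: List[str],
    sample: Sampler,
    score: Scorer,
    keep: int = 2,
    variants_per_seed: int = 2,
    iterations: int = 3,
) -> str:
    """Keep the top candidates and repeatedly sample local variations of them."""
    pool = list(dict.fromkeys(seeds))  # deduplicate while preserving order
    for _ in range(iterations):
        # Keep only the highest-scoring candidates found so far.
        top = sorted(pool, key=score, reverse=True)[:keep]
        # Ask the LLM for semantically similar variants of each survivor.
        variants = [
            sample(RESAMPLE_TEMPLATE.format(instruction=c)).strip()
            for c in top
            for _ in range(variants_per_seed)
        ]
        pool = list(dict.fromkeys(top + variants))
    return max(pool, key=score)


# Toy stand-ins so the loop can be traced by hand (both are hypothetical).
def toy_sample(prompt: str) -> str:
    # Recover the instruction from the template and "vary" it by appending "!".
    instruction = prompt.split("Input: ")[1].split("\n\nOutput:")[0]
    return instruction + "!"


def toy_score(instruction: str) -> float:
    return float(instruction.count("!"))


result = iterative_search(["Answer", "Reply"], toy_sample, toy_score)
print(result)
```

In a real run, `sample` would query an LLM and `score` would measure performance on training data; the toys here only make each round's improvement visible.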

Imagine you have a set of examples where someone asked a question (Q) and got an answer (A). This set is called D_train. You also have a model that responds to questions; let's call it M.

The challenge is to find a single instruction (let's call it ρ) such that when you give this instruction along with a question Q to the model M, it produces the correct answer A. In other words, you want to find the best way to tell the model what to do so that it answers correctly.
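The paper formalizes this as finding ρ* = argmax_ρ E_(Q,A)[f(ρ, Q, A)], where f scores how well M does on a pair (Q, A) when prompted with ρ. One score the paper uses is execution accuracy: 1 if M's output for the instruction followed by Q matches A, else 0. Below is a minimal Python sketch of that score averaged over D_train; the model is a hypothetical function from prompt string to output string, and the prompt format in `build_prompt` is an assumption.

```python
from typing import Callable, List, Tuple

# A dataset is a list of (question, answer) pairs, playing the role of D_train.
Dataset = List[Tuple[str, str]]
# Hypothetical stand-in for the model M: maps a prompt string to an output string.
Model = Callable[[str], str]


def build_prompt(instruction: str, question: str) -> str:
    """Combine the instruction rho with a question Q (format is an assumption)."""
    return f"Instruction: {instruction}\nInput: {question}\nOutput:"


def execution_accuracy(model: Model, instruction: str, data: Dataset) -> float:
    """Fraction of pairs for which M's output exactly matches the answer A."""
    if not data:
        return 0.0
    correct = sum(
        model(build_prompt(instruction, q)).strip() == a.strip() for q, a in data
    )
    return correct / len(data)


# Toy model (hypothetical): uppercases the input only if told to.
def toy_model(prompt: str) -> str:
    question = prompt.split("Input: ")[1].split("\nOutput:")[0]
    return question.upper() if "uppercase" in prompt.lower() else question


data = [("cat", "CAT"), ("dog", "DOG")]
print(execution_accuracy(toy_model, "Convert the input to uppercase.", data))  # 1.0
print(execution_accuracy(toy_model, "Repeat the input.", data))  # 0.0
```

The optimization problem is then to pick, among candidate instructions, the one with the highest average score.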

This is hard because, although people have long done it by hand, it is difficult to know in advance which instructions work best for a given model. The proposed solution is to use an LLM itself to help search for good instructions.

The proposed algorithm, Automatic Prompt Engineer (APE), does two main things. First, it uses an LLM to propose a set of candidate instructions. Then, it scores each candidate and picks the best one. In effect, APE is a search procedure, guided by an LLM, for the best way to tell the model what to do.
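The two steps can be sketched as a small Python function. This is a hedged illustration rather than the authors' code: `propose` and `score` are hypothetical callables (in practice, an LLM call and a dataset-based metric such as execution accuracy), and the toy versions below exist only so the example runs.

```python
from typing import Callable, List, Tuple

Dataset = List[Tuple[str, str]]


def ape_select(
    propose: Callable[[Dataset, int], List[str]],
    score: Callable[[str, Dataset], float],
    demos: Dataset,
    train: Dataset,
    num_candidates: int = 8,
) -> Tuple[str, float]:
    """Propose candidate instructions, score each one, and return the best."""
    # Step 1: ask the (hypothetical) proposal function for candidates, dropping duplicates.
    candidates = list(dict.fromkeys(propose(demos, num_candidates)))
    if not candidates:
        raise ValueError("no candidates proposed")
    # Step 2: score every candidate on the training data and keep the highest.
    scored = [(c, score(c, train)) for c in candidates]
    return max(scored, key=lambda pair: pair[1])


# Toy stand-ins (hypothetical) so the example is self-contained.
def fixed_proposals(demos: Dataset, n: int) -> List[str]:
    return ["Repeat the input.", "Convert the input to uppercase.", "Repeat the input."][:n]


def keyword_score(instruction: str, data: Dataset) -> float:
    return 1.0 if "uppercase" in instruction else 0.0


best, best_score = ape_select(fixed_proposals, keyword_score, [("cat", "CAT")], [("dog", "DOG")])
print(best, best_score)  # Convert the input to uppercase. 1.0
```

Keeping proposal and scoring as separate callables mirrors the two-step structure: either step can be swapped out (for example, a cheaper scorer) without touching the other.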

Finding the right instruction is hard because the space of possible instructions is effectively infinite, which has made direct search intractable in the past. However, recent advances in Natural Language Processing (NLP) have shown that language models are very good at generating diverse natural language text.

So, the idea here is to use a pre-trained LLM to help. Instead of trying out instructions at random (which usually won't work), we can ask the language model to infer likely instructions from input-output demonstrations drawn from D_train. This uses the model's knowledge to propose a set of candidate solutions, which then guide the search for the right instruction.

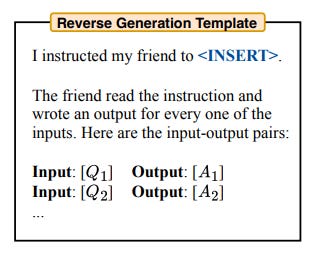

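The paper describes two approaches for generating high-quality candidates: Forward Mode Generation and Reverse Mode Generation. In forward mode, the demonstrations come first and the model completes the instruction at the end of the prompt using ordinary left-to-right decoding. In reverse mode, the instruction is a blank placed before the demonstrations, which a model with infilling capabilities fills in.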
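The difference between the two modes is mainly where the instruction slot sits in the prompt. Below is a minimal Python sketch assuming simple "Input/Output" formatting. The template wording is paraphrased in the spirit of the paper rather than copied from it, and `[INSERT]` is a hypothetical placeholder; real infilling models each use their own special token.

```python
from typing import List, Tuple

Dataset = List[Tuple[str, str]]

# Hypothetical infill marker; actual infilling models define their own token.
INFILL = "[INSERT]"


def format_demos(demos: Dataset) -> str:
    """Render demonstrations as 'Input: ... / Output: ...' blocks."""
    return "\n\n".join(f"Input: {q}\nOutput: {a}" for q, a in demos)


def forward_prompt(demos: Dataset) -> str:
    """Forward mode: the prompt ends where the instruction goes, so any LLM can continue it."""
    return (
        "I gave a friend an instruction. Based on the instruction they produced "
        "the following input-output pairs:\n\n"
        f"{format_demos(demos)}\n\n"
        "The instruction was to"
    )


def reverse_prompt(demos: Dataset) -> str:
    """Reverse mode: the instruction slot precedes the demos and must be infilled."""
    return (
        f"I instructed my friend to {INFILL}. "
        "The friend read the instruction and wrote an output for every one of the inputs. "
        "Here are the input-output pairs:\n\n"
        f"{format_demos(demos)}"
    )


demos = [("hot", "cold"), ("up", "down")]
print(forward_prompt(demos))
print(reverse_prompt(demos))
```

Sampling many completions of either prompt yields the pool of candidate instructions that the selection step then scores.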

To estimate the scores of candidate instructions efficiently, the authors use an adaptive filtering scheme rather than evaluating every candidate on the entire training set. Initially, all candidates are scored on a small subset of the dataset. Those scoring above a certain threshold are then evaluated on a new, non-overlapping subset, and their running-average score is updated. This is repeated until only a small set of candidates remains, and those are evaluated on the entire dataset. By spending more computation on promising candidates and very little on poor ones, the scheme substantially reduces cost while keeping score estimates accurate where they matter.
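The filtering scheme can be sketched as follows. Assumptions: `score` is a hypothetical function returning a candidate's score on a batch, and because the text only specifies "a certain threshold", the sketch uses the median of the current running averages, which keeps roughly the top half each round. Chunk size and the final pool size are illustrative parameters.

```python
from statistics import median
from typing import Callable, Dict, List, Tuple

Dataset = List[Tuple[str, str]]
# Hypothetical: score of an instruction on a batch of examples (higher is better).
Score = Callable[[str, Dataset], float]


def adaptive_filter(
    candidates: List[str],
    data: Dataset,
    score: Score,
    chunk_size: int = 2,
    final_size: int = 2,
) -> Tuple[str, float]:
    """Prune candidates on small non-overlapping chunks, then rank survivors on all data."""
    if not candidates or not data:
        raise ValueError("need at least one candidate and one example")
    # Non-overlapping subsets of the training data, consumed one per round.
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    totals: Dict[str, float] = {c: 0.0 for c in candidates}
    survivors = list(dict.fromkeys(candidates))
    rounds = 0
    for chunk in chunks:
        if len(survivors) <= final_size:
            break
        rounds += 1
        for c in survivors:
            totals[c] += score(c, chunk)
        # Every survivor has seen the same number of chunks, so this is its running average.
        averages = {c: totals[c] / rounds for c in survivors}
        # Assumed threshold: the median of current averages (always keeps at least one).
        threshold = median(averages.values())
        survivors = [c for c in survivors if averages[c] >= threshold]
    # Only the few remaining candidates are evaluated on the entire dataset.
    return max(((c, score(c, data)) for c in survivors), key=lambda p: p[1])


# Toy scorer (hypothetical): each candidate has a fixed quality; calls are logged.
quality = {"A": 0.9, "B": 0.2, "C": 0.6, "D": 0.1}
calls: List[Tuple[str, int]] = []


def toy_score(instruction: str, batch: Dataset) -> float:
    calls.append((instruction, len(batch)))
    return quality[instruction]


data = [(str(i), str(i)) for i in range(8)]
best, best_score = adaptive_filter(list(quality), data, toy_score)
print(best, best_score)  # A 0.9
```

With four candidates and eight examples, this scores 24 examples in total instead of the 32 needed to evaluate every candidate on the full set; the savings grow with the number of candidates.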

This work views large language models (LLMs) as general-purpose computers that execute programs specified by natural language prompts. The approach automates prompt engineering by framing it as a black-box optimization problem and solving it with efficient search algorithms guided by LLMs. The method achieves human-level performance across different tasks with minimal human input. Given the remarkable ability of recent LLMs to follow human instructions, the authors anticipate that future models, even those for formal program synthesis, will have natural language interfaces. This research lays a foundation for controlling and steering generative artificial intelligence.

Next week’s Paper

CLIP (Contrastive Language-Image Pretraining): Vision-language tasks such as visual entailment require understanding both the visual world and the language world. Previous work on these tasks relies on human-annotated datasets that are expensive to build or require expert knowledge, so the data available for visual entailment is tiny compared with text corpora. The authors of this paper argue that, by leveraging the power of language, CLIP is a strong few-shot vision-language learner.